Meta announced a major update to its open-source Immersive Web SDK (IWSDK), a framework that lets developers build VR experiences on the web using WebXR. The update adds an “agentic workflow” powered by AI coding assistants.

Originally launched at Meta Connect last year, IWSDK aimed to simplify VR development tasks like physics, hand-tracking, movement, grab interactions, and spatial UI, which Meta says lets creators focus on ideas instead of low-level engineering.

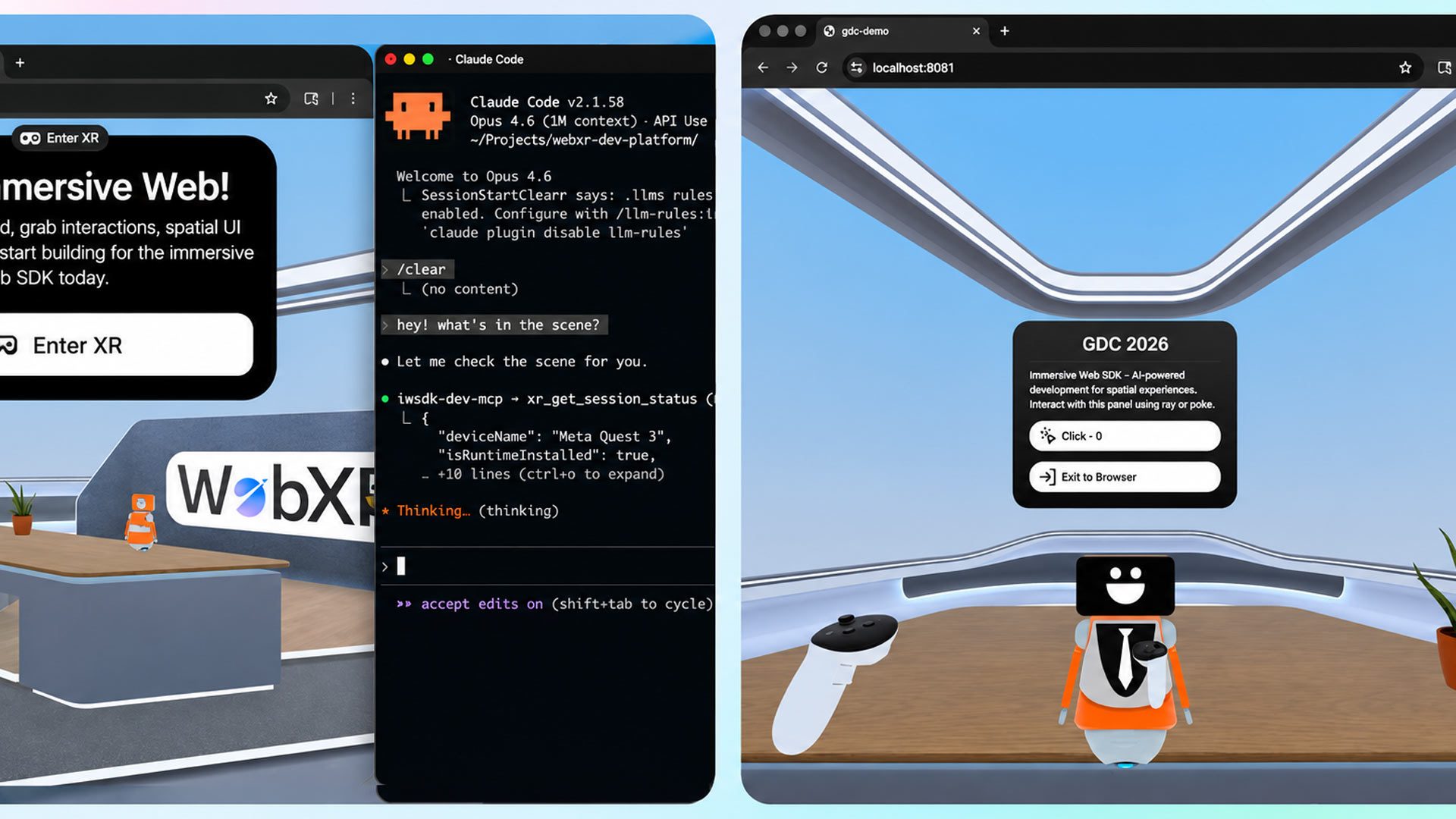

The update, which Meta announced in a developer blog post, adds an “agentic workflow” powered by AI coding assistants such as Claude Code, Cursor, GitHub Copilot, and Codex.

“In practice, agentic workflows mean the AI does more than generate code; it also tests and validates it. This closed-loop system is essential for high-quality, reliable results. IWSDK’s AI integration closes this loop entirely, offering developers maximum productivity,” Meta says.
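For readers curious what a "closed loop" looks like in code, here is a minimal TypeScript sketch of the generate, test, and retry pattern Meta describes. It is not IWSDK's implementation: every name in it (`closedLoop`, `Generate`, `Validate`, `Candidate`, `Verdict`) is hypothetical, and the generator and validator are stand-ins for an AI assistant and a test harness.

```typescript
// Hypothetical types: none of these names come from IWSDK or any AI assistant's API.
type Candidate = { code: string };
type Verdict = { ok: boolean; feedback: string };

// A generator proposes code, optionally using feedback from the last failed check.
type Generate = (task: string, feedback?: string) => Candidate;
// A validator checks the candidate (for example, by running tests) and reports back.
type Validate = (candidate: Candidate) => Verdict;

function closedLoop(
  task: string,
  generate: Generate,
  validate: Validate,
  maxAttempts = 3,
): { candidate: Candidate; attempts: number } | null {
  let feedback: string | undefined;
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    const candidate = generate(task, feedback);
    const verdict = validate(candidate);
    if (verdict.ok) return { candidate, attempts: attempt };
    feedback = verdict.feedback; // feed the failure back into the next attempt
  }
  return null; // no candidate passed validation within maxAttempts
}
```

The point of the sketch is the loop itself: output is only accepted once it passes validation, and each failure becomes input to the next attempt rather than being handed back to the developer.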

To demonstrate the system, Meta rebuilt its 2022 VR gardening demo ‘Project Flowerbed’, previously made up of tens of thousands of lines of custom code. Using IWSDK’s AI workflow and existing art assets, the company says it recreated the entire application in only 15 hours, noting the tool isn’t about “fixing a typo or generating boilerplate. It’s a full, interactive VR experience for web, rebuilt by AI using IWSDK.”

Meta’s reasoning behind its latest (and certainly not last) injection of AI centers mainly on ease of deployment. Web-based VR can be tested instantly in a browser without lengthy compile times, and can also be deployed across desktop and VR headsets via a simple URL, bypassing app stores and downloads. Notably, the company says over one million monthly users already access WebXR content on Quest.

If you’re looking to learn more, or explore Meta’s new AI workflow, check out IWSDK here. You can also find the open-source project (under MIT licensing) over on GitHub.
