Meta announced it’s tapped six external teams to receive research grants to advance work on its surface electromyography (sEMG)-based wristband controller.

Meta revealed in a blog post it’s launched a research funding initiative focused on improving how users learn and interact with sEMG systems, having chosen six universities out of 70 global submissions.

Each research group is set to receive $150,000 in funding. The teams are based at the University of Central Florida, University of South Florida, University of California, Davis, Newcastle University, University of British Columbia, and Northwestern University.

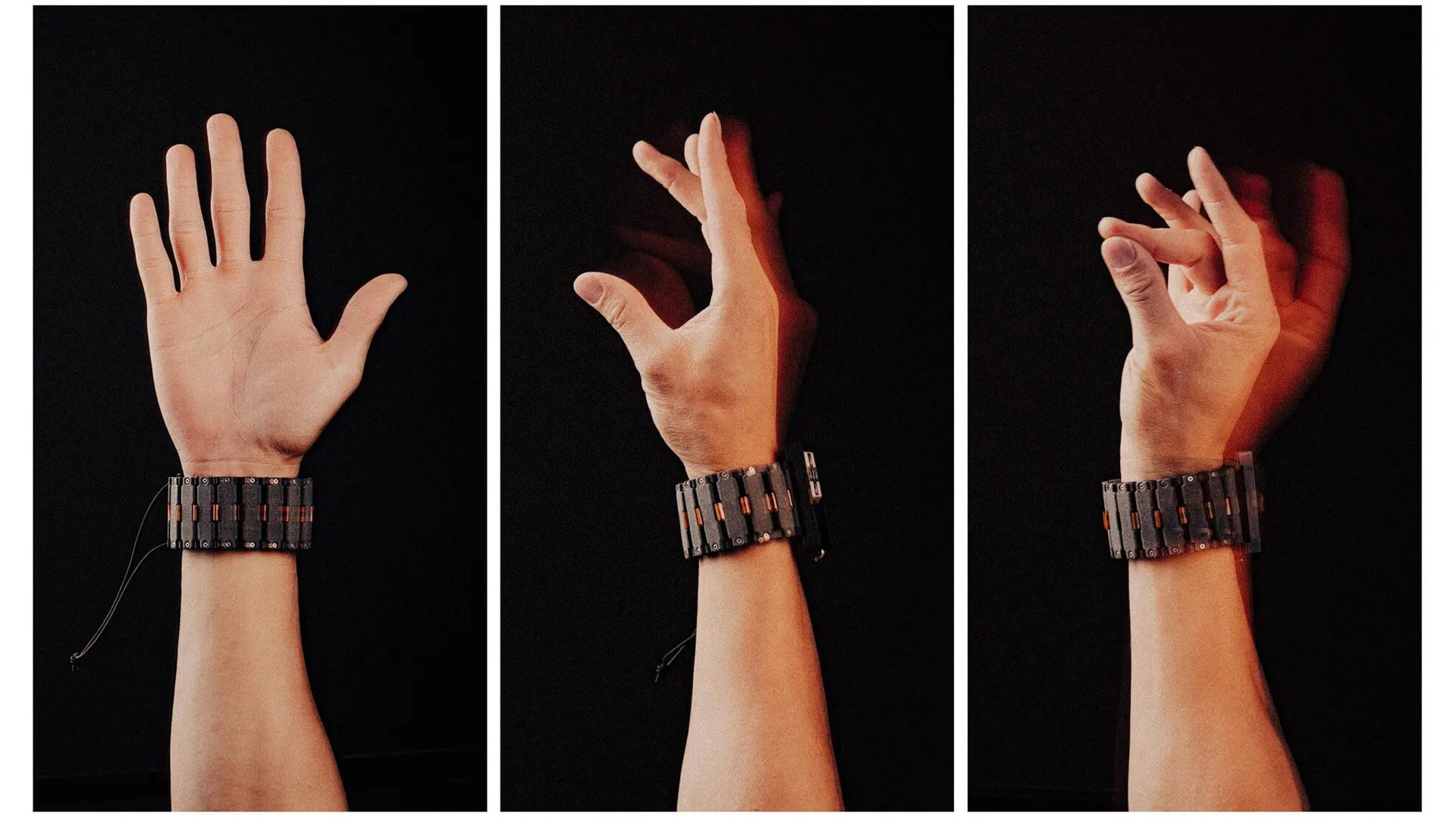

Meta’s wrist-worn neural interface relies on sEMG, which detects electrical activity in the wrist and hand and translates it into digital commands. The wristband is Meta Ray-Ban Display’s main input device, and the company hopes the studies will answer a few questions, namely: how do people learn new sEMG-based controls, and how can onboarding be streamlined?

The funded projects explore a range of challenges. Some focus on improving learning methods, such as comparing gamified training with step-by-step instruction, or developing systems that adapt to individual users over time.

Others aim to expand what sEMG can do, like enabling silent speech generation by translating muscle signals into synthesized voice, or increasing the ‘bandwidth’ of communication so users can issue more complex commands without disrupting natural hand movement.

A number of the proposed projects target assistive applications, such as helping stroke survivors regain muscle control, or improving prosthetic limb operation through co-adaptive systems that learn alongside the user. You can see more about each study here.

This follows the release of Meta Ray-Ban Display last September, the company’s first pair of smart glasses with a built-in display. Priced at $800 and only available in the US for now, the smart glasses make use of the same input scheme first paired with Meta’s Orion AR prototype, which was revealed in late 2024.

This ostensibly shows Meta is pretty confident in the control scheme, viewing it as reliable enough for future (likely AR) devices. We’re looking forward to learning more as the research projects progress. Typically, we see papers either highlighted or released during SIGGRAPH, which is taking place in Los Angeles, California this year on July 19th – 23rd.
